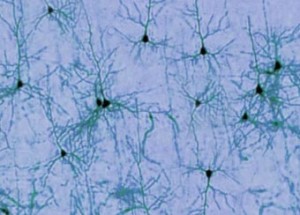

Individual neurons can resonate, groups of neurons can resonate and the entire brain can resonate. This has been known for years, but the idea of resonance as memory storage seems not to have been widely discussed. If you have some citations, I would love to see them!

Artificial neural networks provide us with wonderful simulations of how actual nerves might work in a connected group, but neural networks are still captives of silicon and use ordinary RAM to store their results.

Neural network theory might seem like a rival to resonant memory, but it is designed mainly for calculating outputs from inputs; how the memory itself is stored is left unstated. The theory discussed here concerns how resonance might underpin memory storage and retrieval. It may, however, offer more computing power than is traditionally associated with simple memory systems. For instance, it makes sense to connect memories when laying them down, so that retrieving a given memory also fetches its associated memories automatically. This adds a layer of computing power to simple data retrieval.
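To make the idea of linked retrieval concrete, here is a minimal sketch in Python. It is only an illustration built on ordinary dictionaries, not a model of resonance, and the names `AssociativeStore`, `store`, `recall` and `related` are made up for this example. It shows just the bookkeeping: links are recorded when a memory is laid down, so a single lookup returns the associated memories as well.

```python
from collections import defaultdict


class AssociativeStore:
    """Toy memory store: links are made at storage time, not at retrieval time."""

    def __init__(self):
        self.content = {}              # key -> remembered value
        self.links = defaultdict(set)  # key -> keys of associated memories

    def store(self, key, value, related=()):
        """Lay down a memory and link it (both ways) to already-stored ones."""
        self.content[key] = value
        for other in related:
            if other in self.content:
                self.links[key].add(other)
                self.links[other].add(key)

    def recall(self, key):
        """Return the memory plus everything linked to it, or (None, {}) if unknown."""
        if key not in self.content:
            return None, {}
        linked = {k: self.content[k] for k in sorted(self.links[key])}
        return self.content[key], linked


memory = AssociativeStore()
memory.store("beach", "summer trip to the coast")
memory.store("sunburn", "forgot the sunscreen", related=["beach"])

value, linked = memory.recall("beach")
print(value)   # the requested memory
print(linked)  # memories that were linked to it when they were stored
```

Recalling "beach" also brings back "sunburn" without a second query, which is the extra layer of computing power the paragraph above describes. How a resonant system would achieve the same effect is a separate question that this sketch does not try to answer.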

Why even consider resonance for memory systems? There are several compelling reasons. I’ll list them here and then discuss them in greater detail in subsequent articles.

- Human memory has holographic properties, which may be related to resonance.

- Thinking has to be fast if an animal is to avoid becoming some creature’s lunch.

- A realistic memory model needs to account for how quickly we know that we don’t know something.

- It is hard to understand how you could suppress resonant circuits in the brain.

- Our thoughts are highly metaphorical, which relates well to resonance.

These are a few of the rough directional musings that stimulate thinking about resonance in animal memory. They are suggestive only, but a good theory should be able to illuminate these issues and hopefully not contradict them.